3D Semantic Label Benchmark

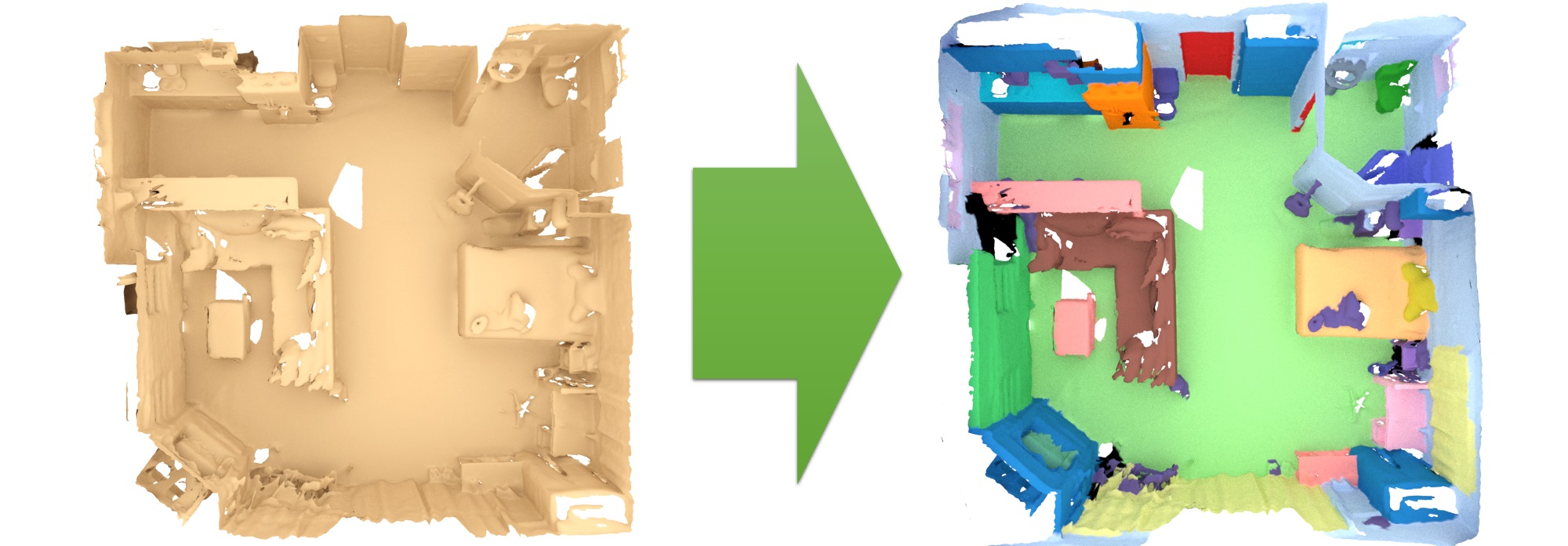

The 3D semantic labeling task involves predicting a semantic labeling of a 3D scan mesh.

Evaluation and metrics

Our evaluation ranks all methods by the PASCAL VOC intersection-over-union (IoU) metric: IoU = TP/(TP+FP+FN), where TP, FP, and FN are the numbers of true positive, false positive, and false negative vertices, respectively. Predicted labels are evaluated per vertex over the respective 3D scan mesh. 3D approaches that operate on other representations, such as grids or points, should map their predicted labels onto the mesh vertices (an example of mapping grid labels to mesh vertices is provided in the evaluation helpers).
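As a rough illustration of the metric, the following sketch maps voxel-grid predictions onto mesh vertices and then computes per-class IoU over those vertices. It is not the benchmark's evaluation code: the function names, the dictionary-based voxel grid, the containing-voxel lookup, and the choice to skip vertices whose ground-truth label is `0` are all assumptions made for this example.

```python
from math import floor


def voxel_labels_to_vertices(vertices, voxel_labels, voxel_size, unlabeled=0):
    """Give each mesh vertex the label of the voxel that contains it.

    `voxel_labels` is a hypothetical dict keyed by integer voxel coordinates.
    Vertices that fall in a voxel without a prediction get `unlabeled`.
    """
    labels = []
    for x, y, z in vertices:
        key = (floor(x / voxel_size), floor(y / voxel_size), floor(z / voxel_size))
        labels.append(voxel_labels.get(key, unlabeled))
    return labels


def per_class_iou(gt, pred, class_ids, ignore_label=0):
    """Return {class_id: IoU}, with IoU = TP / (TP + FP + FN) counted per vertex."""
    ious = {}
    for c in class_ids:
        tp = fp = fn = 0
        for g, p in zip(gt, pred):
            if g == ignore_label:
                continue  # assumption: vertices without ground truth are skipped
            if p == c and g == c:
                tp += 1
            elif p == c:
                fp += 1
            elif g == c:
                fn += 1
        denom = tp + fp + fn
        # A class that appears in neither gt nor pred has no defined IoU.
        ious[c] = tp / denom if denom else float("nan")
    return ious


# Toy scene: four vertices along the x axis, 5 cm voxels, two classes.
vertices = [(0.01, 0.01, 0.01), (0.06, 0.01, 0.01), (0.11, 0.01, 0.01), (0.16, 0.01, 0.01)]
voxel_labels = {(0, 0, 0): 1, (1, 0, 0): 1, (2, 0, 0): 2}  # last voxel has no prediction
gt = [1, 2, 2, 2]

pred = voxel_labels_to_vertices(vertices, voxel_labels, voxel_size=0.05)
ious = per_class_iou(gt, pred, class_ids=[1, 2])
mean_iou = sum(ious.values()) / len(ious)
print(pred, ious, mean_iou)
```

In this toy scene, class 1 has one true positive and one false positive, and class 2 has one true positive and two false negatives. The last column of a leaderboard row would correspond to averaging such per-class values over all 20 classes.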

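This table lists the benchmark results for the 3D semantic label scenario. Each cell gives the IoU followed by the method's rank in that column; a row containing only authors and a title is the publication for the method directly above it.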

| Method | avg IoU | bathtub | bed | bookshelf | cabinet | chair | counter | curtain | desk | door | floor | otherfurniture | picture | refrigerator | shower curtain | sink | sofa | table | toilet | wall | window | Info |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Volt ScanNet | 0.805 1 | 0.932 5 | 0.846 3 | 0.801 49 | 0.775 10 | 0.862 11 | 0.604 1 | 0.955 1 | 0.779 1 | 0.722 4 | 0.980 1 | 0.635 1 | 0.352 12 | 0.799 3 | 0.941 4 | 0.887 1 | 0.807 20 | 0.748 2 | 0.973 3 | 0.911 1 | 0.798 6 | |

| Kadir Yilmaz, Adrian Kruse, Tristan Höfer, Daan de Geus, Bastian Leibe: Volume Transformer: Revisiting Vanilla Transformers for 3D Scene Understanding. | ||||||||||||||||||||||

| PTv3-PPT-ALC | 0.798 2 | 0.911 12 | 0.812 24 | 0.854 8 | 0.770 13 | 0.856 16 | 0.555 18 | 0.943 2 | 0.660 27 | 0.735 2 | 0.979 2 | 0.606 8 | 0.492 1 | 0.792 5 | 0.934 5 | 0.841 3 | 0.819 6 | 0.716 10 | 0.947 11 | 0.906 2 | 0.822 1 | |

| Guangda Ji, Silvan Weder, Francis Engelmann, Marc Pollefeys, Hermann Blum: ARKit LabelMaker: A New Scale for Indoor 3D Scene Understanding. CVPR 2025 | ||||||||||||||||||||||

| DITR ScanNet | 0.797 3 | 0.727 78 | 0.869 1 | 0.882 1 | 0.785 6 | 0.868 7 | 0.578 6 | 0.943 2 | 0.744 2 | 0.727 3 | 0.979 2 | 0.627 3 | 0.364 9 | 0.824 1 | 0.949 2 | 0.779 16 | 0.844 1 | 0.757 1 | 0.982 1 | 0.905 3 | 0.802 3 | |

| Karim Abou Zeid, Kadir Yilmaz, Daan de Geus, Alexander Hermans, David Adrian, Timm Linder, Bastian Leibe: DINO in the Room: Leveraging 2D Foundation Models for 3D Segmentation. 3DV 2026 | ||||||||||||||||||||||

| PTv3 ScanNet | 0.794 4 | 0.941 3 | 0.813 23 | 0.851 11 | 0.782 7 | 0.890 2 | 0.597 2 | 0.916 7 | 0.696 12 | 0.713 6 | 0.979 2 | 0.635 1 | 0.384 3 | 0.793 4 | 0.907 11 | 0.821 6 | 0.790 38 | 0.696 15 | 0.967 5 | 0.903 4 | 0.805 2 | |

| Xiaoyang Wu, Li Jiang, Peng-Shuai Wang, Zhijian Liu, Xihui Liu, Yu Qiao, Wanli Ouyang, Tong He, Hengshuang Zhao: Point Transformer V3: Simpler, Faster, Stronger. CVPR 2024 (Oral) | ||||||||||||||||||||||

| PonderV2 | 0.785 5 | 0.978 1 | 0.800 32 | 0.833 30 | 0.788 4 | 0.853 21 | 0.545 22 | 0.910 10 | 0.713 4 | 0.705 7 | 0.979 2 | 0.596 10 | 0.390 2 | 0.769 16 | 0.832 46 | 0.821 6 | 0.792 37 | 0.730 3 | 0.975 2 | 0.897 7 | 0.785 8 | |

| Haoyi Zhu, Honghui Yang, Xiaoyang Wu, Di Huang, Sha Zhang, Xianglong He, Tong He, Hengshuang Zhao, Chunhua Shen, Yu Qiao, Wanli Ouyang: PonderV2: Pave the Way for 3D Foundation Model with A Universal Pre-training Paradigm. | ||||||||||||||||||||||

| Mix3D | 0.781 6 | 0.964 2 | 0.855 2 | 0.843 20 | 0.781 8 | 0.858 14 | 0.575 9 | 0.831 41 | 0.685 18 | 0.714 5 | 0.979 2 | 0.594 11 | 0.310 32 | 0.801 2 | 0.892 20 | 0.841 3 | 0.819 6 | 0.723 7 | 0.940 16 | 0.887 9 | 0.725 30 | |

| Alexey Nekrasov, Jonas Schult, Or Litany, Bastian Leibe, Francis Engelmann: Mix3D: Out-of-Context Data Augmentation for 3D Scenes. 3DV 2021 (Oral) | ||||||||||||||||||||||

| Swin3D | 0.779 7 | 0.861 25 | 0.818 18 | 0.836 27 | 0.790 3 | 0.875 4 | 0.576 8 | 0.905 11 | 0.704 8 | 0.739 1 | 0.969 13 | 0.611 4 | 0.349 13 | 0.756 26 | 0.958 1 | 0.702 53 | 0.805 21 | 0.708 11 | 0.916 40 | 0.898 6 | 0.801 4 | |

| TTT-KD | 0.773 8 | 0.646 99 | 0.818 18 | 0.809 42 | 0.774 11 | 0.878 3 | 0.581 4 | 0.943 2 | 0.687 16 | 0.704 8 | 0.978 7 | 0.607 7 | 0.336 21 | 0.775 12 | 0.912 9 | 0.838 5 | 0.823 4 | 0.694 16 | 0.967 5 | 0.899 5 | 0.794 7 | |

| Lisa Weijler, Muhammad Jehanzeb Mirza, Leon Sick, Can Ekkazan, Pedro Hermosilla: TTT-KD: Test-Time Training for 3D Semantic Segmentation through Knowledge Distillation from Foundation Models. | ||||||||||||||||||||||

| ResLFE_HDS | 0.772 9 | 0.939 4 | 0.824 8 | 0.854 8 | 0.771 12 | 0.840 36 | 0.564 14 | 0.900 13 | 0.686 17 | 0.677 15 | 0.961 19 | 0.537 37 | 0.348 14 | 0.769 16 | 0.903 13 | 0.785 14 | 0.815 9 | 0.676 27 | 0.939 17 | 0.880 14 | 0.772 12 | |

| OctFormer | 0.766 10 | 0.925 8 | 0.808 28 | 0.849 13 | 0.786 5 | 0.846 31 | 0.566 13 | 0.876 20 | 0.690 14 | 0.674 18 | 0.960 20 | 0.576 23 | 0.226 75 | 0.753 28 | 0.904 12 | 0.777 17 | 0.815 9 | 0.722 8 | 0.923 32 | 0.877 18 | 0.776 11 | |

| Peng-Shuai Wang: OctFormer: Octree-based Transformers for 3D Point Clouds. SIGGRAPH 2023 | ||||||||||||||||||||||

| PPT-SpUNet-Joint | 0.766 10 | 0.932 5 | 0.794 38 | 0.829 32 | 0.751 27 | 0.854 19 | 0.540 26 | 0.903 12 | 0.630 40 | 0.672 19 | 0.963 17 | 0.565 27 | 0.357 10 | 0.788 6 | 0.900 15 | 0.737 32 | 0.802 22 | 0.685 21 | 0.950 9 | 0.887 9 | 0.780 9 | |

| Xiaoyang Wu, Zhuotao Tian, Xin Wen, Bohao Peng, Xihui Liu, Kaicheng Yu, Hengshuang Zhao: Towards Large-scale 3D Representation Learning with Multi-dataset Point Prompt Training. CVPR 2024 | ||||||||||||||||||||||

| CU-Hybrid Net | 0.764 12 | 0.924 9 | 0.819 15 | 0.840 23 | 0.757 22 | 0.853 21 | 0.580 5 | 0.848 33 | 0.709 6 | 0.643 29 | 0.958 25 | 0.587 17 | 0.295 40 | 0.753 28 | 0.884 24 | 0.758 24 | 0.815 9 | 0.725 6 | 0.927 28 | 0.867 29 | 0.743 21 | |

| OccuSeg+Semantic | 0.764 12 | 0.758 63 | 0.796 36 | 0.839 24 | 0.746 31 | 0.907 1 | 0.562 15 | 0.850 32 | 0.680 20 | 0.672 19 | 0.978 7 | 0.610 5 | 0.335 23 | 0.777 10 | 0.819 50 | 0.847 2 | 0.830 3 | 0.691 18 | 0.972 4 | 0.885 11 | 0.727 28 | |

| O-CNN | 0.762 14 | 0.924 9 | 0.823 9 | 0.844 19 | 0.770 13 | 0.852 23 | 0.577 7 | 0.847 35 | 0.711 5 | 0.640 33 | 0.958 25 | 0.592 12 | 0.217 81 | 0.762 21 | 0.888 21 | 0.758 24 | 0.813 13 | 0.726 5 | 0.932 26 | 0.868 28 | 0.744 20 | |

| Peng-Shuai Wang, Yang Liu, Yu-Xiao Guo, Chun-Yu Sun, Xin Tong: O-CNN: Octree-based Convolutional Neural Networks for 3D Shape Analysis. SIGGRAPH 2017 | ||||||||||||||||||||||

| DiffSegNet | 0.758 15 | 0.725 80 | 0.789 43 | 0.843 20 | 0.762 18 | 0.856 16 | 0.562 15 | 0.920 5 | 0.657 30 | 0.658 23 | 0.958 25 | 0.589 15 | 0.337 20 | 0.782 7 | 0.879 25 | 0.787 12 | 0.779 43 | 0.678 23 | 0.926 30 | 0.880 14 | 0.799 5 | |

| DTC | 0.757 16 | 0.843 31 | 0.820 13 | 0.847 16 | 0.791 2 | 0.862 11 | 0.511 40 | 0.870 24 | 0.707 7 | 0.652 25 | 0.954 42 | 0.604 9 | 0.279 51 | 0.760 22 | 0.942 3 | 0.734 33 | 0.766 52 | 0.701 14 | 0.884 63 | 0.874 24 | 0.736 22 | |

| OA-CNN-L_ScanNet20 | 0.756 17 | 0.783 49 | 0.826 7 | 0.858 6 | 0.776 9 | 0.837 41 | 0.548 21 | 0.896 16 | 0.649 32 | 0.675 17 | 0.962 18 | 0.586 18 | 0.335 23 | 0.771 15 | 0.802 55 | 0.770 20 | 0.787 40 | 0.691 18 | 0.936 21 | 0.880 14 | 0.761 15 | |

| PNE | 0.755 18 | 0.786 47 | 0.835 6 | 0.834 29 | 0.758 20 | 0.849 26 | 0.570 11 | 0.836 40 | 0.648 33 | 0.668 21 | 0.978 7 | 0.581 21 | 0.367 7 | 0.683 41 | 0.856 34 | 0.804 9 | 0.801 26 | 0.678 23 | 0.961 7 | 0.889 8 | 0.716 37 | |

| P. Hermosilla: Point Neighborhood Embeddings. | ||||||||||||||||||||||

| LSK3DNet | 0.755 18 | 0.899 18 | 0.823 9 | 0.843 20 | 0.764 17 | 0.838 39 | 0.584 3 | 0.845 36 | 0.717 3 | 0.638 35 | 0.956 32 | 0.580 22 | 0.229 74 | 0.640 51 | 0.900 15 | 0.750 27 | 0.813 13 | 0.729 4 | 0.920 36 | 0.872 26 | 0.757 16 | |

| Tuo Feng, Wenguan Wang, Fan Ma, Yi Yang: LSK3DNet: Towards Effective and Efficient 3D Perception with Large Sparse Kernels. CVPR 2024 | ||||||||||||||||||||||

| ConDaFormer | 0.755 18 | 0.927 7 | 0.822 11 | 0.836 27 | 0.801 1 | 0.849 26 | 0.516 37 | 0.864 29 | 0.651 31 | 0.680 14 | 0.958 25 | 0.584 20 | 0.282 48 | 0.759 24 | 0.855 36 | 0.728 35 | 0.802 22 | 0.678 23 | 0.880 68 | 0.873 25 | 0.756 18 | |

| Lunhao Duan, Shanshan Zhao, Nan Xue, Mingming Gong, Guisong Xia, Dacheng Tao: ConDaFormer: Disassembled Transformer with Local Structure Enhancement for 3D Point Cloud Understanding. NeurIPS 2023 | ||||||||||||||||||||||

| DMF-Net | 0.752 21 | 0.906 16 | 0.793 40 | 0.802 48 | 0.689 48 | 0.825 54 | 0.556 17 | 0.867 25 | 0.681 19 | 0.602 52 | 0.960 20 | 0.555 33 | 0.365 8 | 0.779 9 | 0.859 31 | 0.747 28 | 0.795 34 | 0.717 9 | 0.917 39 | 0.856 37 | 0.764 14 | |

| C.Yang, Y.Yan, W.Zhao, J.Ye, X.Yang, A.Hussain, B.Dong, K.Huang: Towards Deeper and Better Multi-view Feature Fusion for 3D Semantic Segmentation. ICONIP 2023 | ||||||||||||||||||||||

| PointTransformerV2 | 0.752 21 | 0.742 70 | 0.809 27 | 0.872 2 | 0.758 20 | 0.860 13 | 0.552 19 | 0.891 18 | 0.610 47 | 0.687 9 | 0.960 20 | 0.559 31 | 0.304 35 | 0.766 19 | 0.926 7 | 0.767 21 | 0.797 30 | 0.644 40 | 0.942 14 | 0.876 21 | 0.722 33 | |

| Xiaoyang Wu, Yixing Lao, Li Jiang, Xihui Liu, Hengshuang Zhao: Point Transformer V2: Grouped Vector Attention and Partition-based Pooling. NeurIPS 2022 | ||||||||||||||||||||||

| PointConvFormer | 0.749 23 | 0.793 45 | 0.790 41 | 0.807 44 | 0.750 29 | 0.856 16 | 0.524 33 | 0.881 19 | 0.588 60 | 0.642 32 | 0.977 11 | 0.591 13 | 0.274 54 | 0.781 8 | 0.929 6 | 0.804 9 | 0.796 31 | 0.642 41 | 0.947 11 | 0.885 11 | 0.715 38 | |

| Wenxuan Wu, Qi Shan, Li Fuxin: PointConvFormer: Revenge of the Point-based Convolution. | ||||||||||||||||||||||

| BPNet | 0.749 23 | 0.909 14 | 0.818 18 | 0.811 40 | 0.752 25 | 0.839 38 | 0.485 55 | 0.842 37 | 0.673 22 | 0.644 28 | 0.957 30 | 0.528 44 | 0.305 34 | 0.773 13 | 0.859 31 | 0.788 11 | 0.818 8 | 0.693 17 | 0.916 40 | 0.856 37 | 0.723 32 | |

| Wenbo Hu, Hengshuang Zhao, Li Jiang, Jiaya Jia, Tien-Tsin Wong: Bidirectional Projection Network for Cross Dimension Scene Understanding. CVPR 2021 (Oral) | ||||||||||||||||||||||

| MSP | 0.748 25 | 0.623 102 | 0.804 30 | 0.859 5 | 0.745 32 | 0.824 56 | 0.501 44 | 0.912 9 | 0.690 14 | 0.685 11 | 0.956 32 | 0.567 26 | 0.320 29 | 0.768 18 | 0.918 8 | 0.720 40 | 0.802 22 | 0.676 27 | 0.921 34 | 0.881 13 | 0.779 10 | |

| StratifiedFormer | 0.747 26 | 0.901 17 | 0.803 31 | 0.845 18 | 0.757 22 | 0.846 31 | 0.512 39 | 0.825 44 | 0.696 12 | 0.645 27 | 0.956 32 | 0.576 23 | 0.262 65 | 0.744 34 | 0.861 30 | 0.742 30 | 0.770 50 | 0.705 12 | 0.899 52 | 0.860 34 | 0.734 23 | |

| Xin Lai*, Jianhui Liu*, Li Jiang, Liwei Wang, Hengshuang Zhao, Shu Liu, Xiaojuan Qi, Jiaya Jia: Stratified Transformer for 3D Point Cloud Segmentation. CVPR 2022 | ||||||||||||||||||||||

| VMNet | 0.746 27 | 0.870 23 | 0.838 4 | 0.858 6 | 0.729 37 | 0.850 25 | 0.501 44 | 0.874 21 | 0.587 61 | 0.658 23 | 0.956 32 | 0.564 28 | 0.299 37 | 0.765 20 | 0.900 15 | 0.716 43 | 0.812 15 | 0.631 46 | 0.939 17 | 0.858 35 | 0.709 39 | |

| Zeyu Hu, Xuyang Bai, Jiaxiang Shang, Runze Zhang, Jiayu Dong, Xin Wang, Guangyuan Sun, Hongbo Fu, Chiew-Lan Tai: VMNet: Voxel-Mesh Network for Geodesic-Aware 3D Semantic Segmentation. ICCV 2021 (Oral) | ||||||||||||||||||||||

| Virtual MVFusion | 0.746 27 | 0.771 57 | 0.819 15 | 0.848 15 | 0.702 44 | 0.865 10 | 0.397 93 | 0.899 14 | 0.699 10 | 0.664 22 | 0.948 64 | 0.588 16 | 0.330 25 | 0.746 33 | 0.851 40 | 0.764 22 | 0.796 31 | 0.704 13 | 0.935 22 | 0.866 30 | 0.728 26 | |

| Abhijit Kundu, Xiaoqi Yin, Alireza Fathi, David Ross, Brian Brewington, Thomas Funkhouser, Caroline Pantofaru: Virtual Multi-view Fusion for 3D Semantic Segmentation. ECCV 2020 | ||||||||||||||||||||||

| DiffSeg3D2 | 0.745 29 | 0.725 80 | 0.814 22 | 0.837 25 | 0.751 27 | 0.831 48 | 0.514 38 | 0.896 16 | 0.674 21 | 0.684 12 | 0.960 20 | 0.564 28 | 0.303 36 | 0.773 13 | 0.820 49 | 0.713 46 | 0.798 29 | 0.690 20 | 0.923 32 | 0.875 22 | 0.757 16 | |

| ODIN | 0.744 30 | 0.658 95 | 0.752 66 | 0.870 3 | 0.714 41 | 0.843 34 | 0.569 12 | 0.919 6 | 0.703 9 | 0.622 42 | 0.949 61 | 0.591 13 | 0.343 16 | 0.736 35 | 0.784 57 | 0.816 8 | 0.838 2 | 0.672 32 | 0.918 38 | 0.854 41 | 0.725 30 | |

| Ayush Jain, Pushkal Katara, Nikolaos Gkanatsios, Adam W. Harley, Gabriel Sarch, Kriti Aggarwal, Vishrav Chaudhary, Katerina Fragkiadaki: ODIN: A Single Model for 2D and 3D Segmentation. CVPR 2024 | ||||||||||||||||||||||

| Retro-FPN | 0.744 30 | 0.842 32 | 0.800 32 | 0.767 63 | 0.740 33 | 0.836 43 | 0.541 24 | 0.914 8 | 0.672 23 | 0.626 39 | 0.958 25 | 0.552 34 | 0.272 56 | 0.777 10 | 0.886 23 | 0.696 54 | 0.801 26 | 0.674 30 | 0.941 15 | 0.858 35 | 0.717 35 | |

| Peng Xiang*, Xin Wen*, Yu-Shen Liu, Hui Zhang, Yi Fang, Zhizhong Han: Retrospective Feature Pyramid Network for Point Cloud Semantic Segmentation. ICCV 2023 | ||||||||||||||||||||||

| EQ-Net | 0.743 32 | 0.620 103 | 0.799 35 | 0.849 13 | 0.730 36 | 0.822 58 | 0.493 52 | 0.897 15 | 0.664 24 | 0.681 13 | 0.955 36 | 0.562 30 | 0.378 4 | 0.760 22 | 0.903 13 | 0.738 31 | 0.801 26 | 0.673 31 | 0.907 44 | 0.877 18 | 0.745 19 | |

| Zetong Yang*, Li Jiang*, Yanan Sun, Bernt Schiele, Jiaya Jia: A Unified Query-based Paradigm for Point Cloud Understanding. CVPR 2022 | ||||||||||||||||||||||

| SAT | 0.742 33 | 0.860 26 | 0.765 57 | 0.819 35 | 0.769 15 | 0.848 28 | 0.533 28 | 0.829 42 | 0.663 25 | 0.631 38 | 0.955 36 | 0.586 18 | 0.274 54 | 0.753 28 | 0.896 18 | 0.729 34 | 0.760 58 | 0.666 34 | 0.921 34 | 0.855 39 | 0.733 24 | |

| LRPNet | 0.742 33 | 0.816 40 | 0.806 29 | 0.807 44 | 0.752 25 | 0.828 52 | 0.575 9 | 0.839 39 | 0.699 10 | 0.637 36 | 0.954 42 | 0.520 48 | 0.320 29 | 0.755 27 | 0.834 44 | 0.760 23 | 0.772 47 | 0.676 27 | 0.915 42 | 0.862 32 | 0.717 35 | |

| LargeKernel3D | 0.739 35 | 0.909 14 | 0.820 13 | 0.806 46 | 0.740 33 | 0.852 23 | 0.545 22 | 0.826 43 | 0.594 59 | 0.643 29 | 0.955 36 | 0.541 36 | 0.263 64 | 0.723 39 | 0.858 33 | 0.775 19 | 0.767 51 | 0.678 23 | 0.933 24 | 0.848 45 | 0.694 44 | |

| Yukang Chen*, Jianhui Liu*, Xiangyu Zhang, Xiaojuan Qi, Jiaya Jia: LargeKernel3D: Scaling up Kernels in 3D Sparse CNNs. CVPR 2023 | ||||||||||||||||||||||

| RPN | 0.736 36 | 0.776 53 | 0.790 41 | 0.851 11 | 0.754 24 | 0.854 19 | 0.491 54 | 0.866 27 | 0.596 58 | 0.686 10 | 0.955 36 | 0.536 38 | 0.342 17 | 0.624 58 | 0.869 27 | 0.787 12 | 0.802 22 | 0.628 47 | 0.927 28 | 0.875 22 | 0.704 41 | |

| MinkowskiNet | 0.736 36 | 0.859 27 | 0.818 18 | 0.832 31 | 0.709 42 | 0.840 36 | 0.521 35 | 0.853 31 | 0.660 27 | 0.643 29 | 0.951 53 | 0.544 35 | 0.286 46 | 0.731 37 | 0.893 19 | 0.675 63 | 0.772 47 | 0.683 22 | 0.874 75 | 0.852 43 | 0.727 28 | |

| C. Choy, J. Gwak, S. Savarese: 4D Spatio-Temporal ConvNets: Minkowski Convolutional Neural Networks. CVPR 2019 | ||||||||||||||||||||||

| IPCA | 0.731 38 | 0.890 19 | 0.837 5 | 0.864 4 | 0.726 38 | 0.873 5 | 0.530 32 | 0.824 45 | 0.489 95 | 0.647 26 | 0.978 7 | 0.609 6 | 0.336 21 | 0.624 58 | 0.733 65 | 0.758 24 | 0.776 45 | 0.570 73 | 0.949 10 | 0.877 18 | 0.728 26 | |

| MS-SFA-net | 0.730 39 | 0.910 13 | 0.819 15 | 0.837 25 | 0.698 45 | 0.838 39 | 0.532 30 | 0.872 22 | 0.605 51 | 0.676 16 | 0.959 24 | 0.535 40 | 0.341 18 | 0.649 47 | 0.598 89 | 0.708 48 | 0.810 16 | 0.664 36 | 0.895 55 | 0.879 17 | 0.771 13 | |

| online3d | 0.727 40 | 0.715 85 | 0.777 50 | 0.854 8 | 0.748 30 | 0.858 14 | 0.497 49 | 0.872 22 | 0.572 68 | 0.639 34 | 0.957 30 | 0.523 45 | 0.297 39 | 0.750 31 | 0.803 54 | 0.744 29 | 0.810 16 | 0.587 69 | 0.938 19 | 0.871 27 | 0.719 34 | |

| SparseConvNet | 0.725 41 | 0.647 98 | 0.821 12 | 0.846 17 | 0.721 39 | 0.869 6 | 0.533 28 | 0.754 66 | 0.603 54 | 0.614 44 | 0.955 36 | 0.572 25 | 0.325 27 | 0.710 40 | 0.870 26 | 0.724 38 | 0.823 4 | 0.628 47 | 0.934 23 | 0.865 31 | 0.683 47 | |

| PointTransformer++ | 0.725 41 | 0.727 78 | 0.811 26 | 0.819 35 | 0.765 16 | 0.841 35 | 0.502 43 | 0.814 50 | 0.621 43 | 0.623 41 | 0.955 36 | 0.556 32 | 0.284 47 | 0.620 60 | 0.866 28 | 0.781 15 | 0.757 62 | 0.648 38 | 0.932 26 | 0.862 32 | 0.709 39 | |

| MatchingNet | 0.724 43 | 0.812 42 | 0.812 24 | 0.810 41 | 0.735 35 | 0.834 45 | 0.495 51 | 0.860 30 | 0.572 68 | 0.602 52 | 0.954 42 | 0.512 50 | 0.280 50 | 0.757 25 | 0.845 42 | 0.725 37 | 0.780 42 | 0.606 57 | 0.937 20 | 0.851 44 | 0.700 43 | |

| INS-Conv-semantic | 0.717 44 | 0.751 66 | 0.759 60 | 0.812 39 | 0.704 43 | 0.868 7 | 0.537 27 | 0.842 37 | 0.609 49 | 0.608 48 | 0.953 46 | 0.534 41 | 0.293 41 | 0.616 61 | 0.864 29 | 0.719 42 | 0.793 35 | 0.640 42 | 0.933 24 | 0.845 49 | 0.663 53 | |

| PointMetaBase | 0.714 45 | 0.835 33 | 0.785 45 | 0.821 33 | 0.684 50 | 0.846 31 | 0.531 31 | 0.865 28 | 0.614 44 | 0.596 56 | 0.953 46 | 0.500 53 | 0.246 70 | 0.674 42 | 0.888 21 | 0.692 55 | 0.764 54 | 0.624 49 | 0.849 90 | 0.844 50 | 0.675 49 | |

| contrastBoundary | 0.705 46 | 0.769 60 | 0.775 51 | 0.809 42 | 0.687 49 | 0.820 61 | 0.439 81 | 0.812 51 | 0.661 26 | 0.591 58 | 0.945 72 | 0.515 49 | 0.171 100 | 0.633 55 | 0.856 34 | 0.720 40 | 0.796 31 | 0.668 33 | 0.889 60 | 0.847 46 | 0.689 45 | |

| Liyao Tang, Yibing Zhan, Zhe Chen, Baosheng Yu, Dacheng Tao: Contrastive Boundary Learning for Point Cloud Segmentation. CVPR 2022 | ||||||||||||||||||||||

| ClickSeg_Semantic | 0.703 47 | 0.774 55 | 0.800 32 | 0.793 54 | 0.760 19 | 0.847 30 | 0.471 59 | 0.802 54 | 0.463 102 | 0.634 37 | 0.968 15 | 0.491 56 | 0.271 58 | 0.726 38 | 0.910 10 | 0.706 49 | 0.815 9 | 0.551 85 | 0.878 69 | 0.833 51 | 0.570 85 | |

| RFCR | 0.702 48 | 0.889 20 | 0.745 72 | 0.813 38 | 0.672 53 | 0.818 65 | 0.493 52 | 0.815 49 | 0.623 41 | 0.610 46 | 0.947 66 | 0.470 65 | 0.249 69 | 0.594 65 | 0.848 41 | 0.705 50 | 0.779 43 | 0.646 39 | 0.892 58 | 0.823 57 | 0.611 68 | |

| Jingyu Gong, Jiachen Xu, Xin Tan, Haichuan Song, Yanyun Qu, Yuan Xie, Lizhuang Ma: Omni-Supervised Point Cloud Segmentation via Gradual Receptive Field Component Reasoning. CVPR 2021 | ||||||||||||||||||||||

| One Thing One Click | 0.701 49 | 0.825 37 | 0.796 36 | 0.723 70 | 0.716 40 | 0.832 47 | 0.433 83 | 0.816 47 | 0.634 38 | 0.609 47 | 0.969 13 | 0.418 91 | 0.344 15 | 0.559 77 | 0.833 45 | 0.715 44 | 0.808 19 | 0.560 79 | 0.902 49 | 0.847 46 | 0.680 48 | |

| JSENet | 0.699 50 | 0.881 22 | 0.762 58 | 0.821 33 | 0.667 54 | 0.800 78 | 0.522 34 | 0.792 57 | 0.613 45 | 0.607 49 | 0.935 92 | 0.492 55 | 0.205 87 | 0.576 70 | 0.853 38 | 0.691 57 | 0.758 60 | 0.652 37 | 0.872 78 | 0.828 54 | 0.649 57 | |

| Zeyu Hu, Mingmin Zhen, Xuyang Bai, Hongbo Fu, Chiew-Lan Tai: JSENet: Joint Semantic Segmentation and Edge Detection Network for 3D Point Clouds. ECCV 2020 | ||||||||||||||||||||||

| One-Thing-One-Click | 0.693 51 | 0.743 69 | 0.794 38 | 0.655 93 | 0.684 50 | 0.822 58 | 0.497 49 | 0.719 76 | 0.622 42 | 0.617 43 | 0.977 11 | 0.447 78 | 0.339 19 | 0.750 31 | 0.664 82 | 0.703 52 | 0.790 38 | 0.596 62 | 0.946 13 | 0.855 39 | 0.647 58 | |

| Zhengzhe Liu, Xiaojuan Qi, Chi-Wing Fu: One Thing One Click: A Self-Training Approach for Weakly Supervised 3D Semantic Segmentation. CVPR 2021 | ||||||||||||||||||||||

| PicassoNet-II | 0.692 52 | 0.732 74 | 0.772 52 | 0.786 55 | 0.677 52 | 0.866 9 | 0.517 36 | 0.848 33 | 0.509 88 | 0.626 39 | 0.952 51 | 0.536 38 | 0.225 77 | 0.545 83 | 0.704 72 | 0.689 60 | 0.810 16 | 0.564 78 | 0.903 48 | 0.854 41 | 0.729 25 | |

| Huan Lei, Naveed Akhtar, Mubarak Shah, and Ajmal Mian: Geometric feature learning for 3D meshes. | ||||||||||||||||||||||

| Feature_GeometricNet | 0.690 53 | 0.884 21 | 0.754 64 | 0.795 52 | 0.647 61 | 0.818 65 | 0.422 85 | 0.802 54 | 0.612 46 | 0.604 50 | 0.945 72 | 0.462 68 | 0.189 95 | 0.563 76 | 0.853 38 | 0.726 36 | 0.765 53 | 0.632 45 | 0.904 46 | 0.821 60 | 0.606 72 | |

| Kangcheng Liu, Ben M. Chen: https://arxiv.org/abs/2012.09439. arXiv Preprint | ||||||||||||||||||||||

| FusionNet | 0.688 54 | 0.704 87 | 0.741 76 | 0.754 67 | 0.656 56 | 0.829 50 | 0.501 44 | 0.741 71 | 0.609 49 | 0.548 66 | 0.950 57 | 0.522 47 | 0.371 5 | 0.633 55 | 0.756 60 | 0.715 44 | 0.771 49 | 0.623 50 | 0.861 86 | 0.814 63 | 0.658 54 | |

| Feihu Zhang, Jin Fang, Benjamin Wah, Philip Torr: Deep FusionNet for Point Cloud Semantic Segmentation. ECCV 2020 | ||||||||||||||||||||||

| Feature-Geometry Net | 0.685 55 | 0.866 24 | 0.748 69 | 0.819 35 | 0.645 63 | 0.794 81 | 0.450 71 | 0.802 54 | 0.587 61 | 0.604 50 | 0.945 72 | 0.464 67 | 0.201 90 | 0.554 79 | 0.840 43 | 0.723 39 | 0.732 73 | 0.602 60 | 0.907 44 | 0.822 59 | 0.603 75 | |

| KP-FCNN | 0.684 56 | 0.847 30 | 0.758 62 | 0.784 57 | 0.647 61 | 0.814 68 | 0.473 58 | 0.772 60 | 0.605 51 | 0.594 57 | 0.935 92 | 0.450 76 | 0.181 98 | 0.587 66 | 0.805 53 | 0.690 58 | 0.785 41 | 0.614 53 | 0.882 65 | 0.819 61 | 0.632 64 | |

| H. Thomas, C. Qi, J. Deschaud, B. Marcotegui, F. Goulette, L. Guibas: KPConv: Flexible and Deformable Convolution for Point Clouds. ICCV 2019 | ||||||||||||||||||||||

| VACNN++ | 0.684 56 | 0.728 77 | 0.757 63 | 0.776 60 | 0.690 46 | 0.804 76 | 0.464 64 | 0.816 47 | 0.577 67 | 0.587 59 | 0.945 72 | 0.508 52 | 0.276 53 | 0.671 43 | 0.710 70 | 0.663 68 | 0.750 66 | 0.589 67 | 0.881 66 | 0.832 53 | 0.653 56 | |

| DGNet | 0.684 56 | 0.712 86 | 0.784 46 | 0.782 59 | 0.658 55 | 0.835 44 | 0.499 48 | 0.823 46 | 0.641 35 | 0.597 55 | 0.950 57 | 0.487 58 | 0.281 49 | 0.575 71 | 0.619 86 | 0.647 76 | 0.764 54 | 0.620 52 | 0.871 81 | 0.846 48 | 0.688 46 | |

| Superpoint Network | 0.683 59 | 0.851 29 | 0.728 80 | 0.800 51 | 0.653 58 | 0.806 74 | 0.468 61 | 0.804 52 | 0.572 68 | 0.602 52 | 0.946 69 | 0.453 75 | 0.239 73 | 0.519 88 | 0.822 47 | 0.689 60 | 0.762 57 | 0.595 64 | 0.895 55 | 0.827 55 | 0.630 65 | |

| PointContrast_LA_SEM | 0.683 59 | 0.757 64 | 0.784 46 | 0.786 55 | 0.639 65 | 0.824 56 | 0.408 88 | 0.775 59 | 0.604 53 | 0.541 68 | 0.934 96 | 0.532 42 | 0.269 60 | 0.552 80 | 0.777 58 | 0.645 79 | 0.793 35 | 0.640 42 | 0.913 43 | 0.824 56 | 0.671 50 | |

| VI-PointConv | 0.676 61 | 0.770 59 | 0.754 64 | 0.783 58 | 0.621 69 | 0.814 68 | 0.552 19 | 0.758 64 | 0.571 71 | 0.557 64 | 0.954 42 | 0.529 43 | 0.268 62 | 0.530 86 | 0.682 76 | 0.675 63 | 0.719 76 | 0.603 59 | 0.888 61 | 0.833 51 | 0.665 52 | |

| Xingyi Li, Wenxuan Wu, Xiaoli Z. Fern, Li Fuxin: The Devils in the Point Clouds: Studying the Robustness of Point Cloud Convolutions. | ||||||||||||||||||||||

| ROSMRF3D | 0.673 62 | 0.789 46 | 0.748 69 | 0.763 65 | 0.635 67 | 0.814 68 | 0.407 90 | 0.747 68 | 0.581 65 | 0.573 61 | 0.950 57 | 0.484 59 | 0.271 58 | 0.607 62 | 0.754 61 | 0.649 73 | 0.774 46 | 0.596 62 | 0.883 64 | 0.823 57 | 0.606 72 | |

| SALANet | 0.670 63 | 0.816 40 | 0.770 55 | 0.768 62 | 0.652 59 | 0.807 73 | 0.451 68 | 0.747 68 | 0.659 29 | 0.545 67 | 0.924 102 | 0.473 64 | 0.149 110 | 0.571 73 | 0.811 52 | 0.635 83 | 0.746 67 | 0.623 50 | 0.892 58 | 0.794 77 | 0.570 85 | |

| O3DSeg | 0.668 64 | 0.822 38 | 0.771 54 | 0.496 114 | 0.651 60 | 0.833 46 | 0.541 24 | 0.761 63 | 0.555 77 | 0.611 45 | 0.966 16 | 0.489 57 | 0.370 6 | 0.388 107 | 0.580 90 | 0.776 18 | 0.751 64 | 0.570 73 | 0.956 8 | 0.817 62 | 0.646 59 | |

| PointConv | 0.666 65 | 0.781 50 | 0.759 60 | 0.699 78 | 0.644 64 | 0.822 58 | 0.475 57 | 0.779 58 | 0.564 74 | 0.504 85 | 0.953 46 | 0.428 85 | 0.203 89 | 0.586 68 | 0.754 61 | 0.661 69 | 0.753 63 | 0.588 68 | 0.902 49 | 0.813 65 | 0.642 60 | |

| Wenxuan Wu, Zhongang Qi, Li Fuxin: PointConv: Deep Convolutional Networks on 3D Point Clouds. CVPR 2019 | ||||||||||||||||||||||

| PointASNL | 0.666 65 | 0.703 88 | 0.781 48 | 0.751 69 | 0.655 57 | 0.830 49 | 0.471 59 | 0.769 61 | 0.474 98 | 0.537 70 | 0.951 53 | 0.475 63 | 0.279 51 | 0.635 53 | 0.698 75 | 0.675 63 | 0.751 64 | 0.553 84 | 0.816 97 | 0.806 67 | 0.703 42 | |

| Xu Yan, Chaoda Zheng, Zhen Li, Sheng Wang, Shuguang Cui: PointASNL: Robust Point Clouds Processing using Nonlocal Neural Networks with Adaptive Sampling. CVPR 2020 | ||||||||||||||||||||||

| PPCNN++ | 0.663 67 | 0.746 67 | 0.708 83 | 0.722 71 | 0.638 66 | 0.820 61 | 0.451 68 | 0.566 104 | 0.599 56 | 0.541 68 | 0.950 57 | 0.510 51 | 0.313 31 | 0.648 49 | 0.819 50 | 0.616 88 | 0.682 91 | 0.590 66 | 0.869 82 | 0.810 66 | 0.656 55 | |

| Pyunghwan Ahn, Juyoung Yang, Eojindl Yi, Chanho Lee, Junmo Kim: Projection-based Point Convolution for Efficient Point Cloud Segmentation. IEEE Access | ||||||||||||||||||||||

| DCM-Net | 0.658 68 | 0.778 51 | 0.702 86 | 0.806 46 | 0.619 70 | 0.813 71 | 0.468 61 | 0.693 84 | 0.494 91 | 0.524 76 | 0.941 84 | 0.449 77 | 0.298 38 | 0.510 90 | 0.821 48 | 0.675 63 | 0.727 75 | 0.568 76 | 0.826 95 | 0.803 70 | 0.637 62 | |

| Jonas Schult*, Francis Engelmann*, Theodora Kontogianni, Bastian Leibe: DualConvMesh-Net: Joint Geodesic and Euclidean Convolutions on 3D Meshes. CVPR 2020 (Oral) | ||||||||||||||||||||||

| MVF-GNN | 0.658 68 | 0.558 110 | 0.751 67 | 0.655 93 | 0.690 46 | 0.722 103 | 0.453 67 | 0.867 25 | 0.579 66 | 0.576 60 | 0.893 114 | 0.523 45 | 0.293 41 | 0.733 36 | 0.571 92 | 0.692 55 | 0.659 98 | 0.606 57 | 0.875 72 | 0.804 69 | 0.668 51 | |

| HPGCNN | 0.656 70 | 0.698 90 | 0.743 74 | 0.650 95 | 0.564 87 | 0.820 61 | 0.505 42 | 0.758 64 | 0.631 39 | 0.479 89 | 0.945 72 | 0.480 61 | 0.226 75 | 0.572 72 | 0.774 59 | 0.690 58 | 0.735 71 | 0.614 53 | 0.853 89 | 0.776 92 | 0.597 78 | |

| Jisheng Dang, Qingyong Hu, Yulan Guo, Jun Yang: HPGCNN. | ||||||||||||||||||||||

| SAFNet-seg | 0.654 71 | 0.752 65 | 0.734 78 | 0.664 91 | 0.583 82 | 0.815 67 | 0.399 92 | 0.754 66 | 0.639 36 | 0.535 72 | 0.942 82 | 0.470 65 | 0.309 33 | 0.665 44 | 0.539 94 | 0.650 72 | 0.708 81 | 0.635 44 | 0.857 88 | 0.793 79 | 0.642 60 | |

| Linqing Zhao, Jiwen Lu, Jie Zhou: Similarity-Aware Fusion Network for 3D Semantic Segmentation. IROS 2021 | ||||||||||||||||||||||

| RandLA-Net | 0.645 72 | 0.778 51 | 0.731 79 | 0.699 78 | 0.577 83 | 0.829 50 | 0.446 73 | 0.736 72 | 0.477 97 | 0.523 78 | 0.945 72 | 0.454 72 | 0.269 60 | 0.484 97 | 0.749 64 | 0.618 86 | 0.738 69 | 0.599 61 | 0.827 94 | 0.792 82 | 0.621 67 | |

| PointConv-SFPN | 0.641 73 | 0.776 53 | 0.703 85 | 0.721 72 | 0.557 90 | 0.826 53 | 0.451 68 | 0.672 89 | 0.563 75 | 0.483 88 | 0.943 81 | 0.425 88 | 0.162 105 | 0.644 50 | 0.726 66 | 0.659 70 | 0.709 80 | 0.572 72 | 0.875 72 | 0.786 87 | 0.559 91 | |

| MVPNet | 0.641 73 | 0.831 34 | 0.715 81 | 0.671 88 | 0.590 78 | 0.781 87 | 0.394 94 | 0.679 86 | 0.642 34 | 0.553 65 | 0.937 89 | 0.462 68 | 0.256 66 | 0.649 47 | 0.406 107 | 0.626 84 | 0.691 88 | 0.666 34 | 0.877 70 | 0.792 82 | 0.608 71 | |

| Maximilian Jaritz, Jiayuan Gu, Hao Su: Multi-view PointNet for 3D Scene Understanding. GMDL Workshop, ICCV 2019 | ||||||||||||||||||||||

| PointMRNet | 0.640 75 | 0.717 84 | 0.701 87 | 0.692 81 | 0.576 84 | 0.801 77 | 0.467 63 | 0.716 77 | 0.563 75 | 0.459 95 | 0.953 46 | 0.429 84 | 0.169 102 | 0.581 69 | 0.854 37 | 0.605 89 | 0.710 78 | 0.550 86 | 0.894 57 | 0.793 79 | 0.575 83 | |

| FPConv | 0.639 76 | 0.785 48 | 0.760 59 | 0.713 76 | 0.603 73 | 0.798 79 | 0.392 96 | 0.534 109 | 0.603 54 | 0.524 76 | 0.948 64 | 0.457 70 | 0.250 68 | 0.538 84 | 0.723 68 | 0.598 93 | 0.696 86 | 0.614 53 | 0.872 78 | 0.799 72 | 0.567 88 | |

| Yiqun Lin, Zizheng Yan, Haibin Huang, Dong Du, Ligang Liu, Shuguang Cui, Xiaoguang Han: FPConv: Learning Local Flattening for Point Convolution. CVPR 2020 | ||||||||||||||||||||||

| PD-Net | 0.638 77 | 0.797 44 | 0.769 56 | 0.641 100 | 0.590 78 | 0.820 61 | 0.461 65 | 0.537 108 | 0.637 37 | 0.536 71 | 0.947 66 | 0.388 98 | 0.206 86 | 0.656 45 | 0.668 80 | 0.647 76 | 0.732 73 | 0.585 70 | 0.868 83 | 0.793 79 | 0.473 111 | |

| PointSPNet | 0.637 78 | 0.734 73 | 0.692 94 | 0.714 75 | 0.576 84 | 0.797 80 | 0.446 73 | 0.743 70 | 0.598 57 | 0.437 100 | 0.942 82 | 0.403 94 | 0.150 109 | 0.626 57 | 0.800 56 | 0.649 73 | 0.697 85 | 0.557 82 | 0.846 91 | 0.777 91 | 0.563 89 | |

| SConv | 0.636 79 | 0.830 35 | 0.697 90 | 0.752 68 | 0.572 86 | 0.780 89 | 0.445 75 | 0.716 77 | 0.529 81 | 0.530 73 | 0.951 53 | 0.446 79 | 0.170 101 | 0.507 92 | 0.666 81 | 0.636 82 | 0.682 91 | 0.541 92 | 0.886 62 | 0.799 72 | 0.594 79 | |

| Supervoxel-CNN | 0.635 80 | 0.656 96 | 0.711 82 | 0.719 73 | 0.613 71 | 0.757 98 | 0.444 78 | 0.765 62 | 0.534 80 | 0.566 62 | 0.928 100 | 0.478 62 | 0.272 56 | 0.636 52 | 0.531 96 | 0.664 67 | 0.645 102 | 0.508 100 | 0.864 85 | 0.792 82 | 0.611 68 | |

| joint point-based | 0.634 81 | 0.614 104 | 0.778 49 | 0.667 90 | 0.633 68 | 0.825 54 | 0.420 86 | 0.804 52 | 0.467 100 | 0.561 63 | 0.951 53 | 0.494 54 | 0.291 43 | 0.566 74 | 0.458 102 | 0.579 99 | 0.764 54 | 0.559 81 | 0.838 92 | 0.814 63 | 0.598 77 | |

| Hung-Yueh Chiang, Yen-Liang Lin, Yueh-Cheng Liu, Winston H. Hsu: A Unified Point-Based Framework for 3D Segmentation. 3DV 2019 | ||||||||||||||||||||||

| PointMTL | 0.632 82 | 0.731 75 | 0.688 97 | 0.675 85 | 0.591 77 | 0.784 86 | 0.444 78 | 0.565 105 | 0.610 47 | 0.492 86 | 0.949 61 | 0.456 71 | 0.254 67 | 0.587 66 | 0.706 71 | 0.599 92 | 0.665 97 | 0.612 56 | 0.868 83 | 0.791 85 | 0.579 82 | |

| 3DSM_DMMF | 0.631 83 | 0.626 101 | 0.745 72 | 0.801 49 | 0.607 72 | 0.751 99 | 0.506 41 | 0.729 75 | 0.565 73 | 0.491 87 | 0.866 117 | 0.434 80 | 0.197 93 | 0.595 64 | 0.630 85 | 0.709 47 | 0.705 83 | 0.560 79 | 0.875 72 | 0.740 102 | 0.491 106 | |

| APCF-Net | 0.631 83 | 0.742 70 | 0.687 99 | 0.672 86 | 0.557 90 | 0.792 84 | 0.408 88 | 0.665 91 | 0.545 78 | 0.508 82 | 0.952 51 | 0.428 85 | 0.186 96 | 0.634 54 | 0.702 73 | 0.620 85 | 0.706 82 | 0.555 83 | 0.873 76 | 0.798 74 | 0.581 81 | |

| Haojia Lin: Adaptive Pyramid Context Fusion for Point Cloud Perception. GRSL | ||||||||||||||||||||||

| PointNet2-SFPN | 0.631 83 | 0.771 57 | 0.692 94 | 0.672 86 | 0.524 96 | 0.837 41 | 0.440 80 | 0.706 82 | 0.538 79 | 0.446 97 | 0.944 78 | 0.421 90 | 0.219 80 | 0.552 80 | 0.751 63 | 0.591 95 | 0.737 70 | 0.543 91 | 0.901 51 | 0.768 94 | 0.557 92 | |

| FusionAwareConv | 0.630 86 | 0.604 106 | 0.741 76 | 0.766 64 | 0.590 78 | 0.747 100 | 0.501 44 | 0.734 73 | 0.503 90 | 0.527 74 | 0.919 106 | 0.454 72 | 0.323 28 | 0.550 82 | 0.420 106 | 0.678 62 | 0.688 89 | 0.544 89 | 0.896 54 | 0.795 76 | 0.627 66 | |

| Jiazhao Zhang, Chenyang Zhu, Lintao Zheng, Kai Xu: Fusion-Aware Point Convolution for Online Semantic 3D Scene Segmentation. CVPR 2020 | ||||||||||||||||||||||

| DenSeR | 0.628 87 | 0.800 43 | 0.625 109 | 0.719 73 | 0.545 93 | 0.806 74 | 0.445 75 | 0.597 99 | 0.448 105 | 0.519 80 | 0.938 88 | 0.481 60 | 0.328 26 | 0.489 96 | 0.499 101 | 0.657 71 | 0.759 59 | 0.592 65 | 0.881 66 | 0.797 75 | 0.634 63 | |

| SegGroup_sem | 0.627 88 | 0.818 39 | 0.747 71 | 0.701 77 | 0.602 74 | 0.764 95 | 0.385 100 | 0.629 96 | 0.490 93 | 0.508 82 | 0.931 99 | 0.409 93 | 0.201 90 | 0.564 75 | 0.725 67 | 0.618 86 | 0.692 87 | 0.539 93 | 0.873 76 | 0.794 77 | 0.548 95 | |

| An Tao, Yueqi Duan, Yi Wei, Jiwen Lu, Jie Zhou: SegGroup: Seg-Level Supervision for 3D Instance and Semantic Segmentation. TIP 2022 | ||||||||||||||||||||||

| dtc_net | 0.625 89 | 0.703 88 | 0.751 67 | 0.794 53 | 0.535 94 | 0.848 28 | 0.480 56 | 0.676 88 | 0.528 82 | 0.469 92 | 0.944 78 | 0.454 72 | 0.004 122 | 0.464 99 | 0.636 84 | 0.704 51 | 0.758 60 | 0.548 88 | 0.924 31 | 0.787 86 | 0.492 105 | |

| SIConv | 0.625 89 | 0.830 35 | 0.694 92 | 0.757 66 | 0.563 88 | 0.772 93 | 0.448 72 | 0.647 94 | 0.520 84 | 0.509 81 | 0.949 61 | 0.431 83 | 0.191 94 | 0.496 94 | 0.614 87 | 0.647 76 | 0.672 95 | 0.535 96 | 0.876 71 | 0.783 88 | 0.571 84 | |

| Weakly-Openseg v3 | 0.625 89 | 0.924 9 | 0.787 44 | 0.620 102 | 0.555 92 | 0.811 72 | 0.393 95 | 0.666 90 | 0.382 113 | 0.520 79 | 0.953 46 | 0.250 117 | 0.208 84 | 0.604 63 | 0.670 78 | 0.644 80 | 0.742 68 | 0.538 94 | 0.919 37 | 0.803 70 | 0.513 103 | |

| HPEIN | 0.618 92 | 0.729 76 | 0.668 100 | 0.647 97 | 0.597 76 | 0.766 94 | 0.414 87 | 0.680 85 | 0.520 84 | 0.525 75 | 0.946 69 | 0.432 81 | 0.215 82 | 0.493 95 | 0.599 88 | 0.638 81 | 0.617 107 | 0.570 73 | 0.897 53 | 0.806 67 | 0.605 74 | |

| Li Jiang, Hengshuang Zhao, Shu Liu, Xiaoyong Shen, Chi-Wing Fu, Jiaya Jia: Hierarchical Point-Edge Interaction Network for Point Cloud Semantic Segmentation. ICCV 2019 | ||||||||||||||||||||||

| SPH3D-GCN | 0.610 93 | 0.858 28 | 0.772 52 | 0.489 115 | 0.532 95 | 0.792 84 | 0.404 91 | 0.643 95 | 0.570 72 | 0.507 84 | 0.935 92 | 0.414 92 | 0.046 119 | 0.510 90 | 0.702 73 | 0.602 91 | 0.705 83 | 0.549 87 | 0.859 87 | 0.773 93 | 0.534 98 | |

| Huan Lei, Naveed Akhtar, and Ajmal Mian: Spherical Kernel for Efficient Graph Convolution on 3D Point Clouds. TPAMI 2020 | ||||||||||||||||||||||

| AttAN | 0.609 94 | 0.760 62 | 0.667 101 | 0.649 96 | 0.521 97 | 0.793 82 | 0.457 66 | 0.648 93 | 0.528 82 | 0.434 102 | 0.947 66 | 0.401 95 | 0.153 108 | 0.454 100 | 0.721 69 | 0.648 75 | 0.717 77 | 0.536 95 | 0.904 46 | 0.765 95 | 0.485 107 | |

| Gege Zhang, Qinghua Ma, Licheng Jiao, Fang Liu, Qigong Sun: AttAN: Attention Adversarial Networks for 3D Point Cloud Semantic Segmentation. IJCAI 2020 | ||||||||||||||||||||||

| wsss-transformer | 0.600 95 | 0.634 100 | 0.743 74 | 0.697 80 | 0.601 75 | 0.781 87 | 0.437 82 | 0.585 102 | 0.493 92 | 0.446 97 | 0.933 97 | 0.394 96 | 0.011 121 | 0.654 46 | 0.661 83 | 0.603 90 | 0.733 72 | 0.526 97 | 0.832 93 | 0.761 97 | 0.480 108 | |

| LAP-D | 0.594 96 | 0.720 82 | 0.692 94 | 0.637 101 | 0.456 106 | 0.773 92 | 0.391 98 | 0.730 74 | 0.587 61 | 0.445 99 | 0.940 86 | 0.381 99 | 0.288 44 | 0.434 103 | 0.453 104 | 0.591 95 | 0.649 100 | 0.581 71 | 0.777 101 | 0.749 101 | 0.610 70 | |

| DPC | 0.592 97 | 0.720 82 | 0.700 88 | 0.602 106 | 0.480 102 | 0.762 97 | 0.380 101 | 0.713 80 | 0.585 64 | 0.437 100 | 0.940 86 | 0.369 101 | 0.288 44 | 0.434 103 | 0.509 100 | 0.590 97 | 0.639 105 | 0.567 77 | 0.772 102 | 0.755 99 | 0.592 80 | |

| Francis Engelmann, Theodora Kontogianni, Bastian Leibe: Dilated Point Convolutions: On the Receptive Field Size of Point Convolutions on 3D Point Clouds. ICRA 2020 | ||||||||||||||||||||||

| CCRFNet | 0.589 98 | 0.766 61 | 0.659 104 | 0.683 83 | 0.470 105 | 0.740 102 | 0.387 99 | 0.620 98 | 0.490 93 | 0.476 90 | 0.922 104 | 0.355 104 | 0.245 71 | 0.511 89 | 0.511 99 | 0.571 100 | 0.643 103 | 0.493 104 | 0.872 78 | 0.762 96 | 0.600 76 | |

| ROSMRF | 0.580 99 | 0.772 56 | 0.707 84 | 0.681 84 | 0.563 88 | 0.764 95 | 0.362 103 | 0.515 110 | 0.465 101 | 0.465 94 | 0.936 91 | 0.427 87 | 0.207 85 | 0.438 101 | 0.577 91 | 0.536 103 | 0.675 94 | 0.486 105 | 0.723 108 | 0.779 89 | 0.524 100 | |

| SD-DETR | 0.576 100 | 0.746 67 | 0.609 113 | 0.445 119 | 0.517 98 | 0.643 114 | 0.366 102 | 0.714 79 | 0.456 103 | 0.468 93 | 0.870 116 | 0.432 81 | 0.264 63 | 0.558 78 | 0.674 77 | 0.586 98 | 0.688 89 | 0.482 106 | 0.739 106 | 0.733 104 | 0.537 97 | |

| SQN_0.1% | 0.569 101 | 0.676 92 | 0.696 91 | 0.657 92 | 0.497 99 | 0.779 90 | 0.424 84 | 0.548 106 | 0.515 86 | 0.376 107 | 0.902 113 | 0.422 89 | 0.357 10 | 0.379 108 | 0.456 103 | 0.596 94 | 0.659 98 | 0.544 89 | 0.685 111 | 0.665 115 | 0.556 93 | |

| TextureNet | 0.566 102 | 0.672 94 | 0.664 102 | 0.671 88 | 0.494 100 | 0.719 104 | 0.445 75 | 0.678 87 | 0.411 111 | 0.396 105 | 0.935 92 | 0.356 103 | 0.225 77 | 0.412 105 | 0.535 95 | 0.565 101 | 0.636 106 | 0.464 108 | 0.794 100 | 0.680 112 | 0.568 87 | |

| Jingwei Huang, Haotian Zhang, Li Yi, Thomas Funkhouser, Matthias Niessner, Leonidas Guibas: TextureNet: Consistent Local Parametrizations for Learning from High-Resolution Signals on Meshes. CVPR | ||||||||||||||||||||||

| DVVNet | 0.562 103 | 0.648 97 | 0.700 88 | 0.770 61 | 0.586 81 | 0.687 108 | 0.333 107 | 0.650 92 | 0.514 87 | 0.475 91 | 0.906 110 | 0.359 102 | 0.223 79 | 0.340 110 | 0.442 105 | 0.422 114 | 0.668 96 | 0.501 101 | 0.708 109 | 0.779 89 | 0.534 98 | |

| Pointnet++ & Feature | 0.557 104 | 0.735 72 | 0.661 103 | 0.686 82 | 0.491 101 | 0.744 101 | 0.392 96 | 0.539 107 | 0.451 104 | 0.375 108 | 0.946 69 | 0.376 100 | 0.205 87 | 0.403 106 | 0.356 110 | 0.553 102 | 0.643 103 | 0.497 102 | 0.824 96 | 0.756 98 | 0.515 101 | |

| GMLPs | 0.538 105 | 0.495 115 | 0.693 93 | 0.647 97 | 0.471 104 | 0.793 82 | 0.300 110 | 0.477 111 | 0.505 89 | 0.358 109 | 0.903 112 | 0.327 107 | 0.081 116 | 0.472 98 | 0.529 97 | 0.448 112 | 0.710 78 | 0.509 98 | 0.746 104 | 0.737 103 | 0.554 94 | |

| PanopticFusion-label | 0.529 106 | 0.491 116 | 0.688 97 | 0.604 105 | 0.386 111 | 0.632 115 | 0.225 121 | 0.705 83 | 0.434 108 | 0.293 115 | 0.815 119 | 0.348 105 | 0.241 72 | 0.499 93 | 0.669 79 | 0.507 105 | 0.649 100 | 0.442 114 | 0.796 99 | 0.602 119 | 0.561 90 | |

| Gaku Narita, Takashi Seno, Tomoya Ishikawa, Yohsuke Kaji: PanopticFusion: Online Volumetric Semantic Mapping at the Level of Stuff and Things. IROS 2019 (to appear) | ||||||||||||||||||||||

| subcloud_weak | 0.516 107 | 0.676 92 | 0.591 116 | 0.609 103 | 0.442 107 | 0.774 91 | 0.335 106 | 0.597 99 | 0.422 110 | 0.357 110 | 0.932 98 | 0.341 106 | 0.094 115 | 0.298 112 | 0.528 98 | 0.473 110 | 0.676 93 | 0.495 103 | 0.602 117 | 0.721 107 | 0.349 119 | |

| Online SegFusion | 0.515 108 | 0.607 105 | 0.644 107 | 0.579 108 | 0.434 108 | 0.630 116 | 0.353 104 | 0.628 97 | 0.440 106 | 0.410 103 | 0.762 122 | 0.307 109 | 0.167 103 | 0.520 87 | 0.403 108 | 0.516 104 | 0.565 110 | 0.447 112 | 0.678 112 | 0.701 109 | 0.514 102 | |

| Davide Menini, Suryansh Kumar, Martin R. Oswald, Erik Sandstroem, Cristian Sminchisescu, Luc van Gool: A Real-Time Learning Framework for Joint 3D Reconstruction and Semantic Segmentation. Robotics and Automation Letters Submission | ||||||||||||||||||||||

| 3DMV, FTSDF | 0.501 109 | 0.558 110 | 0.608 114 | 0.424 121 | 0.478 103 | 0.690 107 | 0.246 117 | 0.586 101 | 0.468 99 | 0.450 96 | 0.911 108 | 0.394 96 | 0.160 106 | 0.438 101 | 0.212 117 | 0.432 113 | 0.541 115 | 0.475 107 | 0.742 105 | 0.727 105 | 0.477 109 | |

| PCNN | 0.498 110 | 0.559 109 | 0.644 107 | 0.560 110 | 0.420 110 | 0.711 106 | 0.229 119 | 0.414 112 | 0.436 107 | 0.352 111 | 0.941 84 | 0.324 108 | 0.155 107 | 0.238 117 | 0.387 109 | 0.493 106 | 0.529 116 | 0.509 98 | 0.813 98 | 0.751 100 | 0.504 104 | |

| 3DMV | 0.484 111 | 0.484 117 | 0.538 119 | 0.643 99 | 0.424 109 | 0.606 119 | 0.310 108 | 0.574 103 | 0.433 109 | 0.378 106 | 0.796 120 | 0.301 110 | 0.214 83 | 0.537 85 | 0.208 118 | 0.472 111 | 0.507 119 | 0.413 117 | 0.693 110 | 0.602 119 | 0.539 96 | |

| Angela Dai, Matthias Niessner: 3DMV: Joint 3D-Multi-View Prediction for 3D Semantic Scene Segmentation. ECCV'18 | ||||||||||||||||||||||

| PointCNN with RGB | 0.458 112 | 0.577 108 | 0.611 112 | 0.356 123 | 0.321 119 | 0.715 105 | 0.299 112 | 0.376 116 | 0.328 119 | 0.319 113 | 0.944 78 | 0.285 112 | 0.164 104 | 0.216 120 | 0.229 115 | 0.484 108 | 0.545 114 | 0.456 110 | 0.755 103 | 0.709 108 | 0.475 110 | |

| Yangyan Li, Rui Bu, Mingchao Sun, Baoquan Chen: PointCNN. NeurIPS 2018 | ||||||||||||||||||||||

| FCPN | 0.447 113 | 0.679 91 | 0.604 115 | 0.578 109 | 0.380 112 | 0.682 109 | 0.291 113 | 0.106 123 | 0.483 96 | 0.258 121 | 0.920 105 | 0.258 116 | 0.025 120 | 0.231 119 | 0.325 111 | 0.480 109 | 0.560 112 | 0.463 109 | 0.725 107 | 0.666 114 | 0.231 123 | |

| Dario Rethage, Johanna Wald, Jürgen Sturm, Nassir Navab, Federico Tombari: Fully-Convolutional Point Networks for Large-Scale Point Clouds. ECCV 2018 | ||||||||||||||||||||||

| DGCNN_reproduce | 0.446 114 | 0.474 118 | 0.623 110 | 0.463 117 | 0.366 114 | 0.651 112 | 0.310 108 | 0.389 115 | 0.349 117 | 0.330 112 | 0.937 89 | 0.271 114 | 0.126 112 | 0.285 113 | 0.224 116 | 0.350 119 | 0.577 109 | 0.445 113 | 0.625 115 | 0.723 106 | 0.394 115 | |

| Yue Wang, Yongbin Sun, Ziwei Liu, Sanjay E. Sarma, Michael M. Bronstein, Justin M. Solomon: Dynamic Graph CNN for Learning on Point Clouds. TOG 2019 | ||||||||||||||||||||||

| PNET2 | 0.442 115 | 0.548 112 | 0.548 118 | 0.597 107 | 0.363 115 | 0.628 117 | 0.300 110 | 0.292 118 | 0.374 114 | 0.307 114 | 0.881 115 | 0.268 115 | 0.186 96 | 0.238 117 | 0.204 119 | 0.407 115 | 0.506 120 | 0.449 111 | 0.667 113 | 0.620 118 | 0.462 113 | |

| SurfaceConvPF | 0.442 115 | 0.505 114 | 0.622 111 | 0.380 122 | 0.342 117 | 0.654 111 | 0.227 120 | 0.397 114 | 0.367 115 | 0.276 117 | 0.924 102 | 0.240 118 | 0.198 92 | 0.359 109 | 0.262 113 | 0.366 116 | 0.581 108 | 0.435 115 | 0.640 114 | 0.668 113 | 0.398 114 | |

| Hao Pan, Shilin Liu, Yang Liu, Xin Tong: Convolutional Neural Networks on 3D Surfaces Using Parallel Frames. | ||||||||||||||||||||||

| Tangent Convolutions | 0.438 117 | 0.437 120 | 0.646 106 | 0.474 116 | 0.369 113 | 0.645 113 | 0.353 104 | 0.258 120 | 0.282 122 | 0.279 116 | 0.918 107 | 0.298 111 | 0.147 111 | 0.283 114 | 0.294 112 | 0.487 107 | 0.562 111 | 0.427 116 | 0.619 116 | 0.633 117 | 0.352 118 | |

| Maxim Tatarchenko, Jaesik Park, Vladlen Koltun, Qian-Yi Zhou: Tangent Convolutions for Dense Prediction in 3D. CVPR 2018 | ||||||||||||||||||||||

| 3DWSSS | 0.425 118 | 0.525 113 | 0.647 105 | 0.522 111 | 0.324 118 | 0.488 123 | 0.077 124 | 0.712 81 | 0.353 116 | 0.401 104 | 0.636 124 | 0.281 113 | 0.176 99 | 0.340 110 | 0.565 93 | 0.175 123 | 0.551 113 | 0.398 118 | 0.370 124 | 0.602 119 | 0.361 117 | |

| SPLAT Net | 0.393 119 | 0.472 119 | 0.511 120 | 0.606 104 | 0.311 120 | 0.656 110 | 0.245 118 | 0.405 113 | 0.328 119 | 0.197 122 | 0.927 101 | 0.227 120 | 0.000 124 | 0.001 125 | 0.249 114 | 0.271 122 | 0.510 117 | 0.383 120 | 0.593 118 | 0.699 110 | 0.267 121 | |

| Hang Su, Varun Jampani, Deqing Sun, Subhransu Maji, Evangelos Kalogerakis, Ming-Hsuan Yang, Jan Kautz: SPLATNet: Sparse Lattice Networks for Point Cloud Processing. CVPR 2018 | ||||||||||||||||||||||

| ScanNet+FTSDF | 0.383 120 | 0.297 122 | 0.491 121 | 0.432 120 | 0.358 116 | 0.612 118 | 0.274 115 | 0.116 122 | 0.411 111 | 0.265 118 | 0.904 111 | 0.229 119 | 0.079 117 | 0.250 115 | 0.185 120 | 0.320 120 | 0.510 117 | 0.385 119 | 0.548 119 | 0.597 122 | 0.394 115 | |

| PointNet++ | 0.339 121 | 0.584 107 | 0.478 122 | 0.458 118 | 0.256 122 | 0.360 124 | 0.250 116 | 0.247 121 | 0.278 123 | 0.261 120 | 0.677 123 | 0.183 121 | 0.117 113 | 0.212 121 | 0.145 122 | 0.364 117 | 0.346 124 | 0.232 124 | 0.548 119 | 0.523 123 | 0.252 122 | |

| Charles R. Qi, Li Yi, Hao Su, Leonidas J. Guibas: PointNet++: Deep Hierarchical Feature Learning on Point Sets in a Metric Space. | ||||||||||||||||||||||

| GrowSP++ | 0.323 122 | 0.114 124 | 0.589 117 | 0.499 113 | 0.147 124 | 0.555 120 | 0.290 114 | 0.336 117 | 0.290 121 | 0.262 119 | 0.865 118 | 0.102 124 | 0.000 124 | 0.037 123 | 0.000 125 | 0.000 125 | 0.462 121 | 0.381 121 | 0.389 123 | 0.664 116 | 0.473 111 | |

| SSC-UNet | 0.308 123 | 0.353 121 | 0.290 124 | 0.278 124 | 0.166 123 | 0.553 121 | 0.169 123 | 0.286 119 | 0.147 124 | 0.148 124 | 0.908 109 | 0.182 122 | 0.064 118 | 0.023 124 | 0.018 124 | 0.354 118 | 0.363 122 | 0.345 122 | 0.546 121 | 0.685 111 | 0.278 120 | |

| ScanNet | 0.306 124 | 0.203 123 | 0.366 123 | 0.501 112 | 0.311 120 | 0.524 122 | 0.211 122 | 0.002 125 | 0.342 118 | 0.189 123 | 0.786 121 | 0.145 123 | 0.102 114 | 0.245 116 | 0.152 121 | 0.318 121 | 0.348 123 | 0.300 123 | 0.460 122 | 0.437 124 | 0.182 124 | |

| Angela Dai, Angel X. Chang, Manolis Savva, Maciej Halber, Thomas Funkhouser, Matthias Nießner: ScanNet: Richly-annotated 3D Reconstructions of Indoor Scenes. CVPR'17 | ||||||||||||||||||||||

| ERROR | 0.054 125 | 0.000 125 | 0.041 125 | 0.172 125 | 0.030 125 | 0.062 125 | 0.001 125 | 0.035 124 | 0.004 125 | 0.051 125 | 0.143 125 | 0.019 125 | 0.003 123 | 0.041 122 | 0.050 123 | 0.003 124 | 0.054 125 | 0.018 125 | 0.005 125 | 0.264 125 | 0.082 125 | |